By Philip Mead

Our aim at Arabesque AI is to create customisable, actively managed, AI-powered investment portfolios. To do so, our technology must be able to undertake any mandate rather than specialise in one area. This generalisability is a core problem in AI research and has many dimensions: time, geography, and context. For example, in the time dimension you need your models to keep working from the pre-pandemic to the post-pandemic world (and during it too). Geographically, you need to achieve performance anywhere from Peru to Russia, and contextually, you may need to apply your models to both start-ups and established companies. A generalisable AI can adapt to the environment it is in.

Our AutoCIO platform covers 25,000 stocks in 60 countries and over 80 exchanges, with data spanning many market regimes. AutoCIO allows you to create hyper-customised strategies and explore potentially millions of configurations depending on your investment aims. Achieving such generalisation is difficult in any domain, and even more so with financial data. So what are some of the obstacles modellers face?

Covariate Shift

One problem which makes generalisation difficult is covariate shift. This is when, although the relationship between inputs and outputs remains the same, the distribution of your inputs changes. Imagine training a facial recognition device on spotless photos of you, perhaps headshots or dating profile pictures. When we attempt to generalise to your face first thing in the morning, pre-coffee, it might fail. Your face has not changed, but the context has, and so previously learned relationships may not hold.
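
As a minimal sketch of covariate shift, consider a toy setting that is purely illustrative and not drawn from our models: the true relationship is fixed (here assumed to be `y = x ** 2`), a straight line is fitted on inputs between 0 and 1, and the same line is then evaluated on inputs between 2 and 3. The function names and input ranges are assumptions chosen for the illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x


def mse(model, xs, ys):
    """Mean squared error of the fitted line on the given points."""
    a, b = model
    return sum((a * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def true_relationship(x):
    # Assumed input-output relationship; identical in both environments.
    return x ** 2


train_x = [i / 100 for i in range(101)]        # training inputs in [0, 1]
shifted_x = [2 + i / 100 for i in range(101)]  # shifted inputs in [2, 3]

train_y = [true_relationship(x) for x in train_x]
shifted_y = [true_relationship(x) for x in shifted_x]

model = fit_line(train_x, train_y)
train_error = mse(model, train_x, train_y)
shifted_error = mse(model, shifted_x, shifted_y)

print(f"error on training inputs: {train_error:.4f}")
print(f"error on shifted inputs:  {shifted_error:.4f}")
```

The relationship never changes, yet the error grows sharply once the inputs move outside the range seen in training, because the line was only ever a good local approximation.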

Another example, given in this paper by researchers at Facebook, concerns the classification of images of cows and camels. In the training set the animals are in their natural environments; that is, cows are in fields and camels are in deserts. When we attempt to generalise to unnatural habitats, perhaps cows on beaches or camels in the park, we fail spectacularly.

Cows on a beach may seem far-fetched, but to those dealing in real-world data far stranger things occur. You need to be able to spot opportunities or risks however they are presented. Despite training across as wide an array of environments as possible, it is infeasible to enumerate all environments that will be encountered. The usual mantra of more data does not necessarily help, as there are no guarantees the sample will contain the relevant information. Upon entering a new environment not seen before, there are fundamental limits to letting the ‘data talk’.

Changing Relationships

Covariate shift describes the case where the context changes but the input-output relationship does not. A more intractable problem arises when the input-output relationship itself changes, as is often the case with financial time series. In facial recognition this is the case of ageing, injury or plastic surgery: your mother may recognise you, but a naïve machine may not. For cows and camels this is perhaps the new breeds of super cows, which more resemble Arnold Schwarzenegger than a typical Aberdeen Angus and are recognisable to a farmer but not necessarily to your classifier. The rules which you previously learned, whatever the context, may no longer hold.
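
To contrast this with covariate shift, here is a small sketch in which the inputs stay exactly the same but the relationship flips sign between two regimes. The regimes, the slope of 1.5 and the helper names are hypothetical and chosen only to make the effect visible.

```python
def fit_slope(xs, ys):
    """Least-squares slope for a line through the origin, y = w * x."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


def mean_squared_error(w, xs, ys):
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# The same inputs are used in both regimes, so there is no covariate shift.
inputs = [i / 10 for i in range(-20, 21)]

# Hypothetical regimes: the sign of the input-output relationship flips.
old_regime = [1.5 * x for x in inputs]
new_regime = [-1.5 * x for x in inputs]

w = fit_slope(inputs, old_regime)
old_error = mean_squared_error(w, inputs, old_regime)
new_error = mean_squared_error(w, inputs, new_regime)

print(f"learned slope: {w:.2f}")
print(f"error in old regime: {old_error:.4f}")
print(f"error in new regime: {new_error:.4f}")
```

A model fitted on the old regime is essentially perfect there and badly wrong in the new one, even though nothing about the inputs has changed. More data from the old regime would not help.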

In finance, rules change as markets move through volatility regimes, rate cycles, expansions, moderations, and contractions. However powerful your method is, the data you observe may be just the remnants of forgotten relationships. A seasoned trader who has survived for decades may recognise a market shift better than a machine trained only on recent data. The aim is for AI to recognise such shifts too.

A New Approach

A pervasive problem in AI is a lack of understanding of the input data and its context. In the cow and camel example, if, say, 90% of all pictures were taken in the animals' natural habitat, your machine may be able to minimise error by simply classifying anything in a green landscape as a cow and anything in a brown landscape as a camel, achieving 90% accuracy. The problem is that you have not uncovered the causal mechanism that makes a cow a cow; you have identified a spurious correlation that will not generalise. Instead, we want to find robust patterns.
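
The shortcut described above can be sketched with a toy dataset. The data, the 45/45/5/5 split and the function names are hypothetical; only the 90% natural-habitat share comes from the example in the text.

```python
# Hypothetical toy data: each example is (true_animal, background_colour).
# 90 of the 100 pictures show the animal in its natural habitat.
NATURAL_BACKGROUND = {"cow": "green", "camel": "brown"}

dataset = (
    [("cow", "green")] * 45 + [("camel", "brown")] * 45  # natural habitat
    + [("cow", "brown")] * 5 + [("camel", "green")] * 5  # unnatural habitat
)


def shortcut_classifier(background):
    """Predict from background colour alone; the animal is never inspected."""
    return "cow" if background == "green" else "camel"


def accuracy(examples):
    hits = sum(shortcut_classifier(bg) == animal for animal, bg in examples)
    return hits / len(examples)


natural = [(a, bg) for a, bg in dataset if NATURAL_BACKGROUND[a] == bg]
unnatural = [(a, bg) for a, bg in dataset if NATURAL_BACKGROUND[a] != bg]

print(f"overall:   {accuracy(dataset):.0%}")
print(f"natural:   {accuracy(natural):.0%}")
print(f"unnatural: {accuracy(unnatural):.0%}")
```

The classifier scores 90% overall without ever looking at the animal, and it gets every one of the unnatural-habitat pictures wrong.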

These problems are leading AI researchers to rediscover the power of structured models, driven by domain-specific knowledge. Understanding your data features, their context, structure and meaning can lead to more applicable results. The paper which contains the cow/camel example shows one example of this, an approach they call Invariant Risk Minimisation (IRM). In this approach the data is split into two environments: natural (N) and unnatural (U). Rather than accepting a model (like the cow-camel example above) that performs with 100% success in N and 0% in U (for a total of 90%), we encourage the learning of relationships that are stable across environments. We may end up with an accuracy of only 75% in each of N and U, but we have forced the model to focus on those immutable characteristics which make a cow a cow wherever it is. Invariance to the environment is a sign that you have found a true causal relationship.

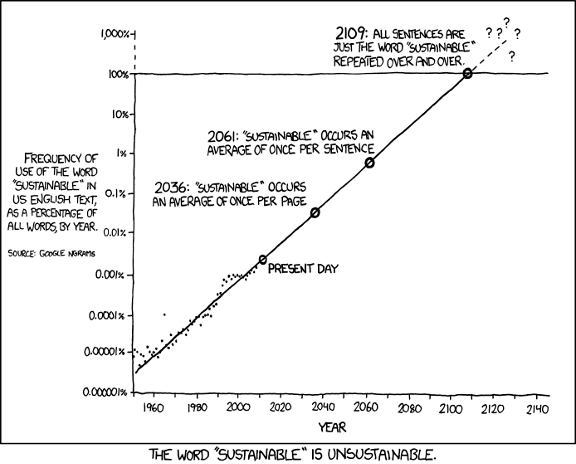
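
The accuracy figures in the IRM discussion above can be checked with a little arithmetic. This sketch takes the per-environment accuracies from the text and asks what happens if the mix of environments changes. The 50% and 10% natural shares are hypothetical future mixes, and the sketch does not implement IRM itself.

```python
def overall_accuracy(acc_by_env, share_natural):
    """Accuracy over a mix of environments, weighted by how often each occurs."""
    return (share_natural * acc_by_env["N"]
            + (1 - share_natural) * acc_by_env["U"])


shortcut = {"N": 1.00, "U": 0.00}   # per-environment figures from the text
invariant = {"N": 0.75, "U": 0.75}  # per-environment figures from the text

# 0.9 is the training mix from the text; 0.5 and 0.1 are hypothetical mixes.
for share_natural in (0.9, 0.5, 0.1):
    print(
        f"natural share {share_natural:.0%}: "
        f"shortcut {overall_accuracy(shortcut, share_natural):.0%}, "
        f"invariant {overall_accuracy(invariant, share_natural):.0%}"
    )

print("worst case, shortcut: ", min(shortcut.values()))
print("worst case, invariant:", min(invariant.values()))
```

The shortcut model only looks better while the world resembles the training mix. The invariant model gives up some headline accuracy in exchange for performance that does not depend on which environment it meets.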

At Arabesque AI we continually research and update our models, and when we do, we want to see improvements across all environments, since this makes it more likely that we have found true relationships rather than spurious correlations. As a closing example, consider another aspect of our business: sustainability. A company that has achieved great success through unsustainable (or simply corrupt) practices is unlikely to repeat out of sample the performance you see in sample. This applies even more as the rules of business change: behaviour that was profitable before may no longer work. Diverse, sustainable, and well-governed companies are better placed to survive across market scenarios; they are more invariant to the environment. Therefore, at Arabesque we believe that carefully constructed AI and sustainability will help to navigate the environments which lie ahead.